Last year, I wrote a blog post about the performance of various NFS solutions in AWS. Earlier this year, Amazon announced its own NFS solution, Elastic File System ("EFS"). I wanted to test EFS the same way and compare its performance with the other NFS solutions I had tested and used in the past. Hopefully, these tests are helpful to AWS managed services providers looking to optimize their disk performance.

The Contenders

GlusterFS

GlusterFS is a simple-to-use NFS service we have used successfully on numerous projects. Overall, Gluster provides excellent reliability, but it occasionally slows down when dealing with large numbers of files in a single directory (10,000+) or with large files (100MB+).

For this test, Gluster will be running on two t2.small servers. Each server will have a 100GB General Purpose SSD EBS volume for data. The file system will be mounted with GlusterFS.

AWS EFS

To use EFS, log in to the AWS Management Console and create a file system that can be mounted on multiple EC2 instances, allowing simultaneous access. Provide the file system with a VPC ID and a name.

When using EFS, there is nothing else to configure, which keeps setup simple. I will be mounting the file system with NFS4.

SoftNAS Cloud

SoftNAS Cloud is a software solution that runs on an EC2 instance. It is configured much like traditional NAS hardware, except that it uses EBS volumes and S3 instead of hard drives.

SoftNAS Cloud will be running on an m3.medium instance with two 100GB General Purpose SSD drives in RAID 0. The file system will be mounted with NFS4. We also enabled Pre-Warm for the volumes and a read cache on the ephemeral SSD.

The Test

We will be running the test from a t2.small instance. We will run the command ‘iozone -T -t16 -r64k -s<Size> -S20480 -I -i0 -i1 -i2’, with <Size> set to four different file sizes: 512k, 2m, 8m, and 512m.

- -i0 - write/rewrite tests

- -i1 - read/reread tests

- -i2 - random read/random write tests

- -T - Use POSIX threads.

- -t16 - The number of threads to run.

- -r64k - Record length.

- -s512m - File size.

- -S20480 - Processor cache size, in kB.

- -I - Direct I/O if possible. (Avoids the disk cache.)
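To make the four runs easier to repeat, the command can be generated per file size. The following is only a minimal sketch: the helper name `build_iozone_cmd` and its defaults are my own illustration, not part of iozone, and it prints the commands rather than running them.

```python
import shlex

# The four file sizes used in this test.
FILE_SIZES = ["512k", "2m", "8m", "512m"]


def build_iozone_cmd(size, threads=16, record="64k", cache_kb=20480):
    """Return the iozone argument list for one file size (hypothetical helper)."""
    return [
        "iozone",
        "-T",                 # use POSIX threads
        f"-t{threads}",       # number of threads
        f"-r{record}",        # record length
        f"-s{size}",          # file size
        f"-S{cache_kb}",      # processor cache size in kB
        "-I",                 # direct I/O where possible
        "-i0", "-i1", "-i2",  # write/rewrite, read/reread, random read/write
    ]


commands = [build_iozone_cmd(size) for size in FILE_SIZES]

if __name__ == "__main__":
    for cmd in commands:
        # Printed only; to run a test, pass cmd to subprocess.run() from a
        # directory on the mounted file system.
        print(shlex.join(cmd))
```

Changing the `threads` argument gives a variant with a different thread count without retyping the rest of the flags.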

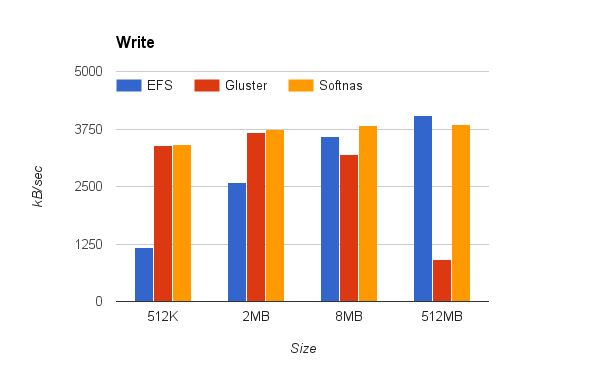

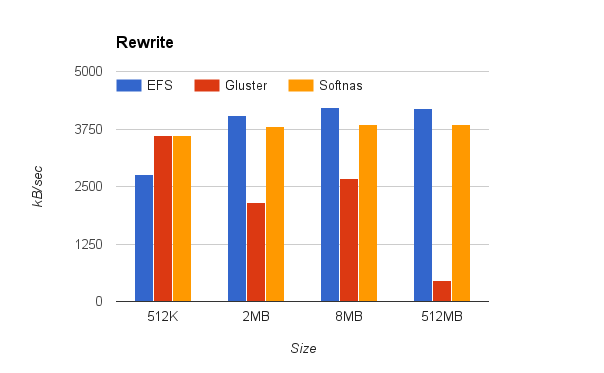

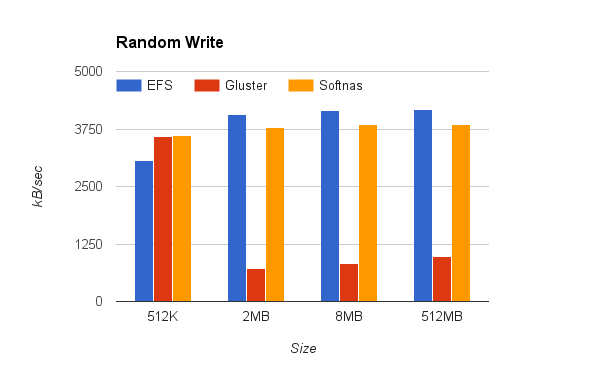

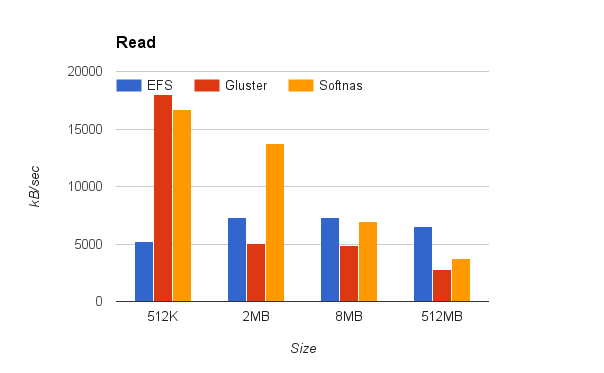

Each test is performed against sixteen files simultaneously. The data presented below uses the average kBps.

The Data

Write

ReWrite

Random Write

Read

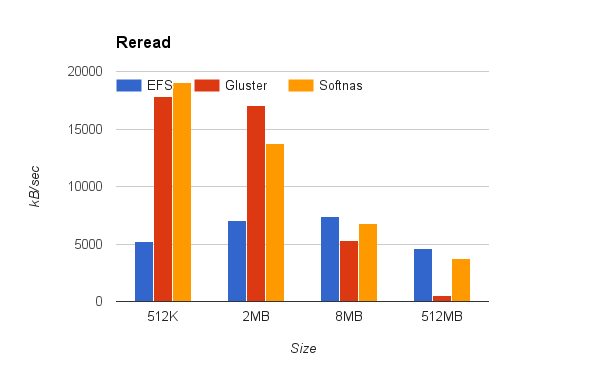

Reread

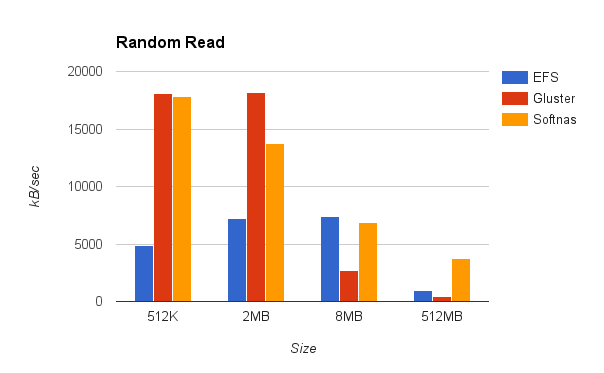

Random Read

The Results

Gluster had great read speed with small files because of built-in caching, but dropped off when the files got larger than the cache limit.

EFS had good, consistent speed across all of the tests.

SoftNAS Cloud had excellent read and write speed through all the sizes. The high throughput for reading was largely because SoftNAS Cloud uses Ephemeral Storage as a read cache.

At Scale:

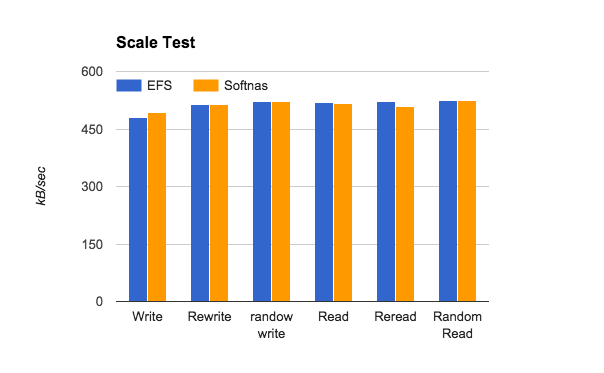

The data above gives you a good idea of a base throughput for these systems, but I wanted to try a larger scale test.

I increased the test instance size to an m3.xlarge and ran iozone for a 2MB file (our average size) and increased the number of threads to 256.

I left all EFS and SoftNAS Cloud settings the same.

This shows that both EFS and SoftNAS Cloud perform well at scale.

Price:

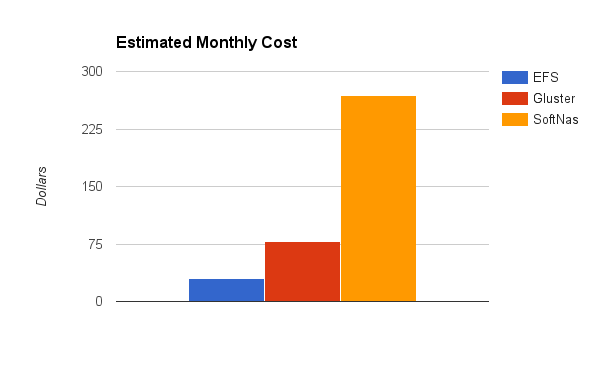

Unlike in the last blog post, this time I am including a cost breakdown for each solution. Costs are shown for a month of 24x7 usage.

Gluster

| Item | Price |
| --- | --- |
| t2.small * 2 | $58 |
| 100GB General SSD EBS * 2 | $20 |
| Total | $78 |

EFS

| Item | Price |
| --- | --- |
| EFS Storage 100GB | $30 |
| Total | $30 |

SoftNAS

| Item | Price |
| --- | --- |
| m3.medium with SoftNAS License * 2 | $249 |
| 100GB General SSD EBS * 4 | $40 |
| Total | $289 |

Note: SoftNAS has published a blog post providing a detailed pricing comparison between these options.

With EFS, you pay only for what you use. This gives it a very low cost of entry.

With Gluster and SoftNAS Cloud, the majority of the cost is in the EC2 instances. This means two things:

- Since General Purpose SSD EBS storage is $0.10 per GB ($0.20 in a high-availability setup), its cost grows at 2/3 the rate of EFS, which is $0.30 per GB. At a certain point, the EC2-based solutions will become cheaper.

- These prices are without reservations. If you reserve the EC2 instances, you can save considerably on that expense.
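To get a feel for where that crossover sits, here is a rough sketch of the arithmetic using the on-demand figures from the tables above. It assumes storage cost scales linearly at $0.20 per usable GB for the EC2-based options and ignores the RAID 0 layout, data transfer, and reservations, so treat the results as ballpark numbers only. The function names are mine.

```python
# Monthly figures from the tables above (USD, on-demand pricing).
EFS_PER_GB = 0.30      # $30 for 100GB of EFS storage
EBS_HA_PER_GB = 0.20   # $0.10 per GB, doubled for high availability
GLUSTER_EC2 = 58.0     # two t2.small instances
SOFTNAS_EC2 = 249.0    # two m3.medium instances with SoftNAS license


def efs_cost(gb):
    """Monthly EFS cost: storage only, no fixed component."""
    return EFS_PER_GB * gb


def self_hosted_cost(gb, ec2_fixed):
    """Monthly cost of an EC2-based option: fixed instances plus EBS."""
    return ec2_fixed + EBS_HA_PER_GB * gb


def break_even_gb(ec2_fixed):
    """Capacity at which the EC2-based option costs the same as EFS."""
    return ec2_fixed / (EFS_PER_GB - EBS_HA_PER_GB)


if __name__ == "__main__":
    for name, fixed in [("Gluster", GLUSTER_EC2), ("SoftNAS Cloud", SOFTNAS_EC2)]:
        print(f"{name}: break-even at about {round(break_even_gb(fixed))} GB")
```

Under these assumptions, Gluster would match EFS at roughly 580 GB and SoftNAS Cloud at roughly 2,490 GB. Reserved instances would lower the fixed cost and pull both points down.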

Conclusion:

So what conclusion do I draw from all of this data?

I think EFS will start to replace our use of Gluster in AWS environments, but for clients outside of AWS, Gluster remains a useful tool.

EFS is extremely fast and easy to set up. It had consistent and reliable performance. Once it is released, it will probably become the default for most of our clients.

SoftNAS Cloud had by far the best performance. Its cost is higher at low capacities, but it will be important when latency is paramount. At higher capacities, where its performance benefits combine with a lower overall cost, I would consider SoftNAS Cloud the clear winner.