Shortly after I wrote AWS NAS Test, I was contacted by Zadara Storage. They had some concerns about the data I reported from my test.

- Zadara believed the scale was off, because the instance sizes I used shouldn’t be able to reach those speeds.

- They expressed concerns about the testing tool IOZone.

- They wanted to make sure that caching wasn’t causing a difference in the results.

After they reached out to me, we agreed to meet and discuss these concerns, which resulted in a few action items. First, I would re-examine the data to make sure the scale was correctly stated. Second, I asked them to run their own tests with IOZone and send me the parameters they used, so I could re-run the tests with the same parameters. Lastly, I re-evaluated the setups to minimize (or eliminate) caching.

Looking at the original data, I discovered that I had not divided by enough to change the scale to MB per second, so the values were indeed all in KB per second. However, the scale was the same across all of the tests, and I believe my original findings were accurate; the difference between the contenders was just not as great as I originally thought.
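Since IOZone reports throughput in kB/sec, getting to MB/s takes one more division by 1,024. A minimal sketch, assuming IOZone's kB is 1,024 bytes and that its throughput-mode summary lines end in `= <value> kB/sec` (some versions print `KB/sec`); `parse_kb_per_sec` and `kb_to_mb` are hypothetical helper names, not part of IOZone:

```python
import re
from typing import Optional

# Assumption: IOZone's "kB" is 1,024 bytes, so 1 MB/s = 1,024 kB/s.
KB_PER_MB = 1024

# Assumed shape of an IOZone throughput line, e.g.
# "Children see throughput for 16 initial writers = <value> kB/sec".
# IGNORECASE also accepts the "KB/sec" spelling.
THROUGHPUT_RE = re.compile(r"=\s*([0-9]+(?:\.[0-9]+)?)\s*kB/sec", re.IGNORECASE)


def parse_kb_per_sec(line: str) -> Optional[float]:
    """Return the kB/sec value from an IOZone throughput line, or None if absent."""
    match = THROUGHPUT_RE.search(line)
    return float(match.group(1)) if match else None


def kb_to_mb(kb_per_sec: float) -> float:
    """Convert kB/sec to MB/sec by dividing by 1,024."""
    return kb_per_sec / KB_PER_MB


if __name__ == "__main__":
    print(kb_to_mb(2048.0))  # prints 2.0
```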

When Zadara Storage got back to me with their test data, this is the command they sent: “iozone -i0 -i1 -T -t16 -r64k -s512m -S 20480 -I -R -b /home/ec2-user/iozone5.xls”

-i0 - write/rewrite tests.

-i1 - read/reread tests.

-T - use POSIX threads.

-t16 - throughput mode with 16 threads.

-r64k - record length (64 KB).

-s512m - file size (512 MB).

-S20480 - processor cache size, in KB (20 MB).

-I - use direct I/O where possible, bypassing the OS buffer cache.

-R - generate an Excel report.

-b - file name for the Excel-compatible report.

The test variables all seemed reasonable to me, but I made a few changes:

I removed -R, because I didn’t want an Excel report.

I removed -b, because I wanted to run the test from the file directory.

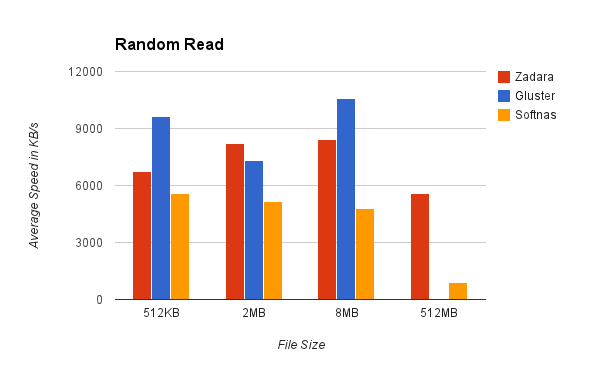

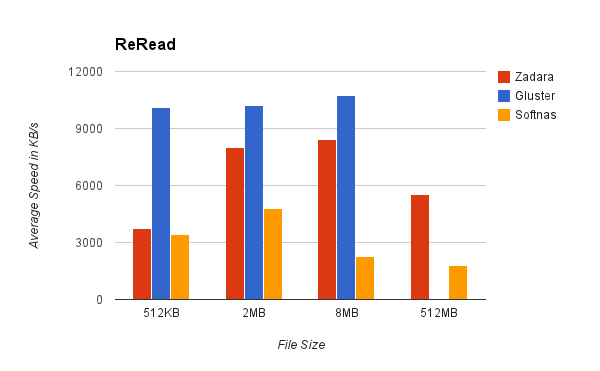

I added -i2, so I could see random read and random write results.

512MB was also outside our primary use case, so I decided to run the test against four different file sizes: 512KB, 2MB, 8MB, and 512MB.

The final command looked something like this, with -s set to each file size in turn: “iozone -T -t16 -r64k -s[file size] -S20480 -I -i0 -i1 -i2”
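Running the same command once per file size is easy to script. The sketch below assumes `iozone` is on the PATH and that the script is started from the NAS mount directory, so the test files land on the NAS. It builds one argument list per size using IOZone's `k`/`m` size suffixes and only executes them when `dry_run` is false. `build_iozone_args` and `run_all` are hypothetical names.

```python
import shlex
import shutil
import subprocess
from typing import List, Sequence

# File sizes from the write-up, using IOZone's k/m size suffixes.
FILE_SIZES = ("512k", "2m", "8m", "512m")


def build_iozone_args(size: str) -> List[str]:
    """Build the modified IOZone command for one file size."""
    return [
        "iozone",
        "-T",         # use POSIX threads
        "-t16",       # throughput mode with 16 threads
        "-r64k",      # 64 KB record length
        f"-s{size}",  # file size for this run
        "-S20480",    # processor cache size in KB
        "-I",         # direct I/O where possible (bypass the OS buffer cache)
        "-i0",        # write/rewrite
        "-i1",        # read/reread
        "-i2",        # random read/random write
    ]


def run_all(sizes: Sequence[str] = FILE_SIZES, dry_run: bool = True) -> List[List[str]]:
    """Print each IOZone command and, unless dry_run is True, execute it.

    Assumption: run from the NAS mount directory so test files are written there.
    """
    commands = [build_iozone_args(size) for size in sizes]
    for args in commands:
        print(shlex.join(args))
        if not dry_run:
            if shutil.which("iozone") is None:
                raise FileNotFoundError("iozone not found on PATH")
            subprocess.run(args, check=True)  # stop on the first failing run
    return commands


if __name__ == "__main__":
    run_all(dry_run=True)
```

Keeping the dry run as the default makes it easy to check the exact commands before starting a long run like the 512MB case.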

The Data

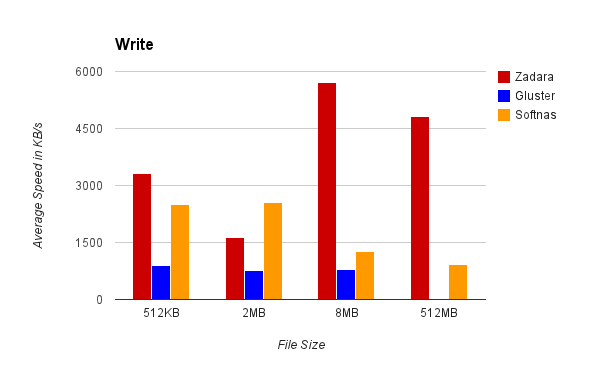

Write:

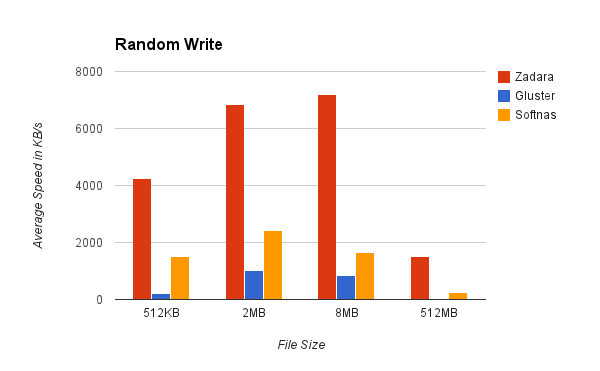

random write:

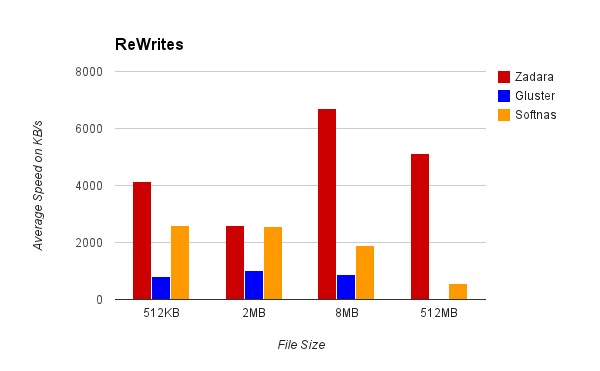

rewrite:

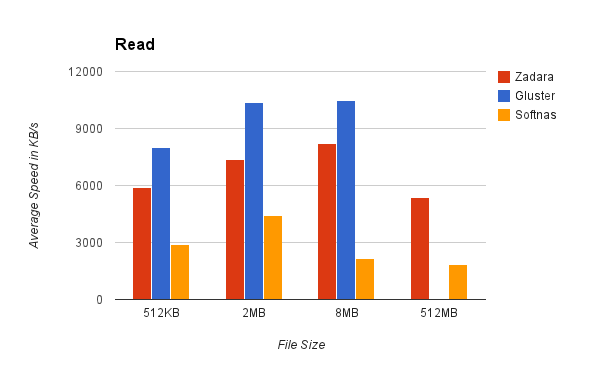

read:

random read

reread

Results

Gluster: It has a default cache setting that I wasn’t aware of, which is why its read values are so awesome here. But when we got to 512MB files, it spent 20 minutes running the first test before I stopped it… at that point, I consider it a failure.

Zadara Storage performed much better than the other contenders (better than I initially assumed). I do note that the speeds for the “write” and “rewrite” tests don’t seem to fit the curve of the rest of the graphs. To me, that points to a bottleneck somewhere. I looked over the EC2 and Zadara monitoring, and no metrics were peaking.

This is hardly a large enough sample to draw conclusions from, but something different happened in those tests. Unfortunately, I didn’t have enough data to locate the problem.

SoftNAS: The overall graph pattern was consistent with what I saw in the last test, although the average this time was slightly lower. Looking into the data, I noticed that the gap between the maximum and minimum speeds was wider than Zadara’s. No resource was peaking on the servers, so I believe this reflects the inconsistent IOPS that come with a basic EBS volume.
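One way to put a number on a wider range between the slowest and fastest results is to divide that range by the average, so setups with different average speeds can still be compared. This is a minimal sketch of that metric, assuming you have the min, max, and average throughput for a test (for example, from IOZone's per-process min/max/avg lines in throughput mode); `ThroughputSummary` and `relative_range` are hypothetical names, not something used to produce the graphs above.

```python
from dataclasses import dataclass


@dataclass
class ThroughputSummary:
    """Min/max/average throughput for one test, all in the same unit (e.g. kB/sec)."""
    minimum: float
    maximum: float
    average: float


def relative_range(summary: ThroughputSummary) -> float:
    """Return (max - min) / average; larger values mean less consistent throughput."""
    if summary.average <= 0:
        raise ValueError("average throughput must be positive")
    if summary.minimum > summary.maximum:
        raise ValueError("minimum must not exceed maximum")
    return (summary.maximum - summary.minimum) / summary.average
```

Computing this for the SoftNAS and Zadara runs of the same test would show which one swung more widely around its average.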

Conclusion

Gluster is once again easy to set up and use, but it is not an option for large file sizes.

Zadara’s out-of-the-box setup is much more consistent than either of the other two options I looked at. This is largely because you are renting dedicated drives.

SoftNAS proved easy to set up, but the inconsistent IOPS of a basic EBS volume dragged down its average speed.

You might be able to get better performance than we saw out of SoftNAS or Gluster by using provisioned IOPS or building a different RAID array, but that would set off a NAS arms race. In the future, I might add a cost analysis as another metric, to see who can build the best system for $X a month.

With a basic setup, I believe it’s clear that the winner for this batch of tests is Zadara Storage, with the caveat that they aren’t available in all regions.