When Metal Toad announced the theme of the winter Hackathon would be Augmented Reality (AR) and Virtual Reality (VR), those of us who have focused our careers on building websites faced a conundrum: how can we learn the principles of AR/VR in just two days without getting sidetracked by the need to learn mobile app development—with its own languages and IDEs and troubleshooting gotchas—at the same time?

My team’s solution was to try A-Frame. A-Frame is an open-source VR framework that enables development of VR applications right in the web browser using familiar HTML, JavaScript, and CSS. Although A-Frame’s capabilities are centered on VR rather than AR, there’s an additional library, AR.js (developed by Jerome Etienne), that would help us create an AR project.

The team (Brad Kouchi, Toby Craig, Chris Hanson, and myself, Kalina Wilson) decided to build a simple treasure hunt in order to get familiar with the basic principles of AR. This would expose us to A-Frame and AR.js. As Brad pointed out, “A-Frame is something all web developers can easily play with to experiment within the traditional restrictions of a browser window.” We were excited to dive in!

The game

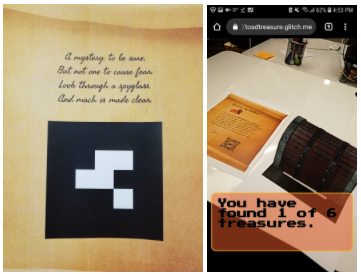

Instead of building an app, we decided to use a simple QR code as the entrance to the treasure hunt. This made the game accessible, as Toby noted: “I’m impressed with how seamlessly any user with a smartphone could snap a QR code and be brought into an AR environment, with nothing needing to be installed.” Once a user starts the treasure hunt, they uncover AR clues that lead them to successive clues, with different AR markers appearing at each stage. This allowed us to focus not just on using the technology, but on finding meaningful ways to tell a story with it. Toby said, “I had a lot of fun with the story and special effects that used the technology, which made it much more interesting than a bland 'hello world' kind of demo.”

Collaboration

We started by fiddling with local code, but then transitioned to glitch.com, a browser-based code editor that allows on-the-fly collaboration. While it’s best suited to projects with a fairly simple structure, we found it a good fit for our needs as a small team working fast to build a web-based project. Team members created individual files for testing a feature, then copied that code into the shared home page once it was working. We could see each other’s changes in real time, and performance was good. We found it to be a very useful team tool; as Brad said, “Glitch is great for collaboration. I definitely see myself relying on it more in the future.”

Technologies

Even though we were building an AR project, the bulk of our work wasn’t actually in A-Frame or AR.js. Those were blessedly straightforward to use, and we were guided well by examples we found online (especially this one from Women Who Code).

Our first hurdle was understanding how to create a “marker.” A marker is a simple pattern that can be recognized by ARToolKit, a library used by AR.js. In AR.js, you can tie recognition of a marker to a specific event, such as the appearance of a 3D model. We generated quite a few markers, but found that some of them didn’t work as well as others. In the end, we chose to use barcode markers because they were reliable and quickly recognized.
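To make the idea of tying a marker to an event concrete, here is a minimal sketch. It is an illustration rather than the code from our project. It assumes the marker is an AR.js `<a-marker>` element, which fires `markerFound` and `markerLost` events; the `watchMarker` helper and its callback names are hypothetical.

```javascript
// Hypothetical helper: run callbacks when a marker enters or leaves the camera view.
// Assumption: `markerEl` is an AR.js <a-marker> element, which emits
// "markerFound" and "markerLost". Any EventTarget works for experimenting.
function watchMarker(markerEl, { onFound, onLost } = {}) {
  const found = () => { if (onFound) onFound(markerEl); };
  const lost = () => { if (onLost) onLost(markerEl); };

  markerEl.addEventListener("markerFound", found);
  markerEl.addEventListener("markerLost", lost);

  // Return a cleanup function so listeners can be removed between game stages.
  return () => {
    markerEl.removeEventListener("markerFound", found);
    markerEl.removeEventListener("markerLost", lost);
  };
}
```

In a page, this might be called with `document.querySelector("a-marker")` and an `onFound` callback that reveals the next clue. Returning a cleanup function is a design choice that keeps each stage of a hunt from reacting to markers that belong to an earlier stage.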

We downloaded a free frog model from TurboSquid, but it wasn’t showing up when we tied it to a marker. Using Blender, we discovered the problem was the scale of the model: it was so large that our camera was inside the model. We were *inside* the toad!
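One way to catch this kind of scale problem in code is to compute a uniform scale factor from the model's dimensions. The sketch below is an illustration under stated assumptions, not what we did during the hackathon (we fixed the model in Blender). The helper names `fitScale` and `toScaleAttribute` are hypothetical, and the target size of 1 assumes the marker is roughly one scene unit wide.

```javascript
// Hypothetical helper: uniform scale factor that shrinks (or grows) a model
// so its largest dimension equals `targetSize` scene units.
// `size` is the model's bounding-box size, e.g. { x: 200, y: 400, z: 300 }.
// In a browser it could come from three.js:
//   new THREE.Box3().setFromObject(object3D).getSize(new THREE.Vector3())
function fitScale(size, targetSize = 1) {
  const largest = Math.max(size.x, size.y, size.z);
  if (!(largest > 0)) {
    throw new RangeError("Model size must have a positive dimension");
  }
  return targetSize / largest;
}

// Format the factor as an "x y z" string, the form A-Frame's scale
// component accepts as an attribute value.
function toScaleAttribute(factor) {
  return `${factor} ${factor} ${factor}`;
}

// Example: a model 400 units tall, scaled to fit a 1-unit marker.
const factor = fitScale({ x: 200, y: 400, z: 300 });
console.log(toScaleAttribute(factor)); // "0.0025 0.0025 0.0025"
```

The resulting string could then be applied with something like `modelEl.setAttribute("scale", toScaleAttribute(factor))`, where `modelEl` is the entity holding the model.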

AR as storytelling

While we did spend time on learning some new technologies, we were struck by how much of working with AR had nothing to do with the AR technology itself. The bulk of our project became about designing the story and then communicating it effectively. We storyboarded how the user would move through the steps in the treasure hunt, what the “happy path” would entail, potential setbacks or error states, and how to add fun to the hunt. We developed rhymes and imagery to spice up the story and even selected fun fonts, creating a pirate adventure console game feel.

Chris noted, “I was really happy with how focused we stayed on the goals of our project and the balance we had between attempting to implement a fussy feature and compromising if we were spinning too long on something. I’m really glad that we had the time to develop the ‘story’ along with the tech.”

We also had to consider storytelling in another way: how to present our game during judging. We decided to record a video on a mobile phone to demonstrate the happy path, and used iMovie to add imagery and a sea shanty soundtrack. We had to edit all kinds of media files, which meant learning the editors for each file type along the way.

The takeaways

Since we usually work in the context of websites, designing a game, especially as a team, was unfamiliar to most of us. But we discovered that even a seemingly daunting technical challenge is more fun and more intuitive when you focus not just on the what, but on the why: what is meaningful or interesting about what you’re doing with the technology. We thought about different paths through the game, attempted to storyboard, and then added fun through every means possible: references to old console games, pirate-themed poetry, sea shanties.

After completing this project, we felt excited about AR and storyboarding for AR, but had also left several concerns unaddressed. We’d been unable to keep a “particle generator” in our project (to create a flow of stars upon opening the final treasure chest) because it caused a lot of lag. We also never identified exactly why some of our markers weren’t working. But we certainly got a foothold in the technology, and in the end created a game that worked and was fun to play.

In the end, what I took home about AR is that it is a time-based art. We weren’t just working in three dimensions; we were actually using four-dimensional concepts, with time as the fourth dimension. This really opens up the world of AR from a conceptual standpoint, and gets me excited about diving deeper into the technology to create four-dimensional experiences that make rewarding stories for the user.